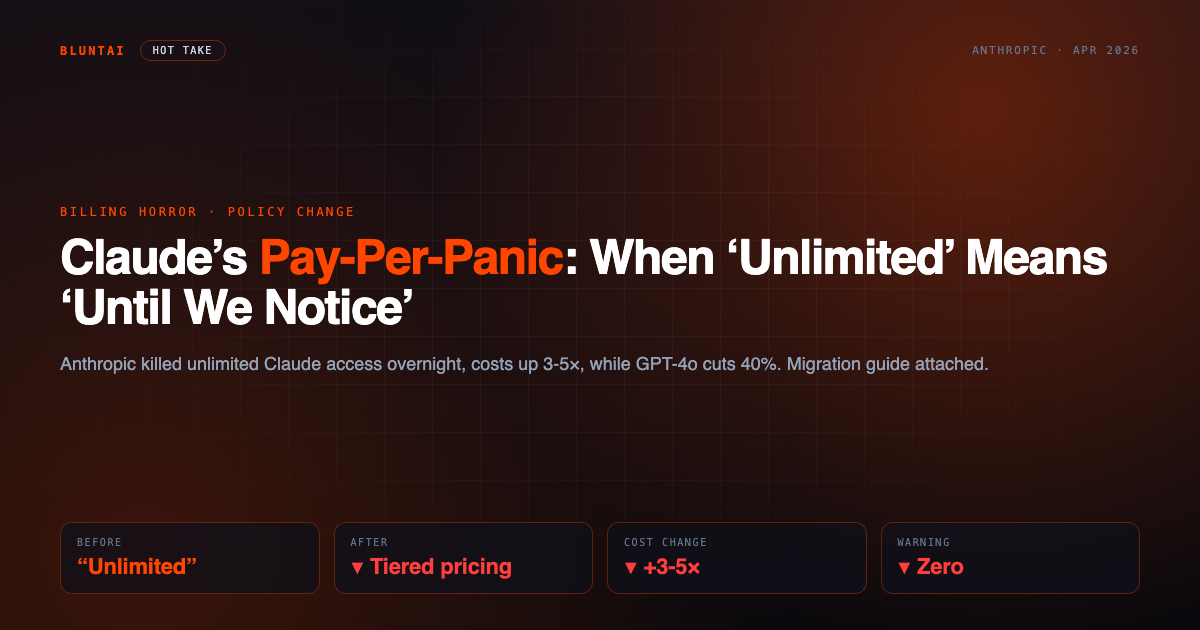

Claude’s Pay-Per-Panic: When ‘Unlimited’ Means ‘Until We Notice’

Rating: ⚫ Uninstalled in 10 minutes

Let’s get one thing out of the way: this isn’t a product review. It’s a post-mortem.

Anthropic just dismantled the pricing structure that thousands of developers and power users built their workflows on. No gradual transition. No meaningful warning. Just a policy update with the emotional tone of a terms-of-service amendment — you know, the kind you’re supposed to read but never do until it costs you money.

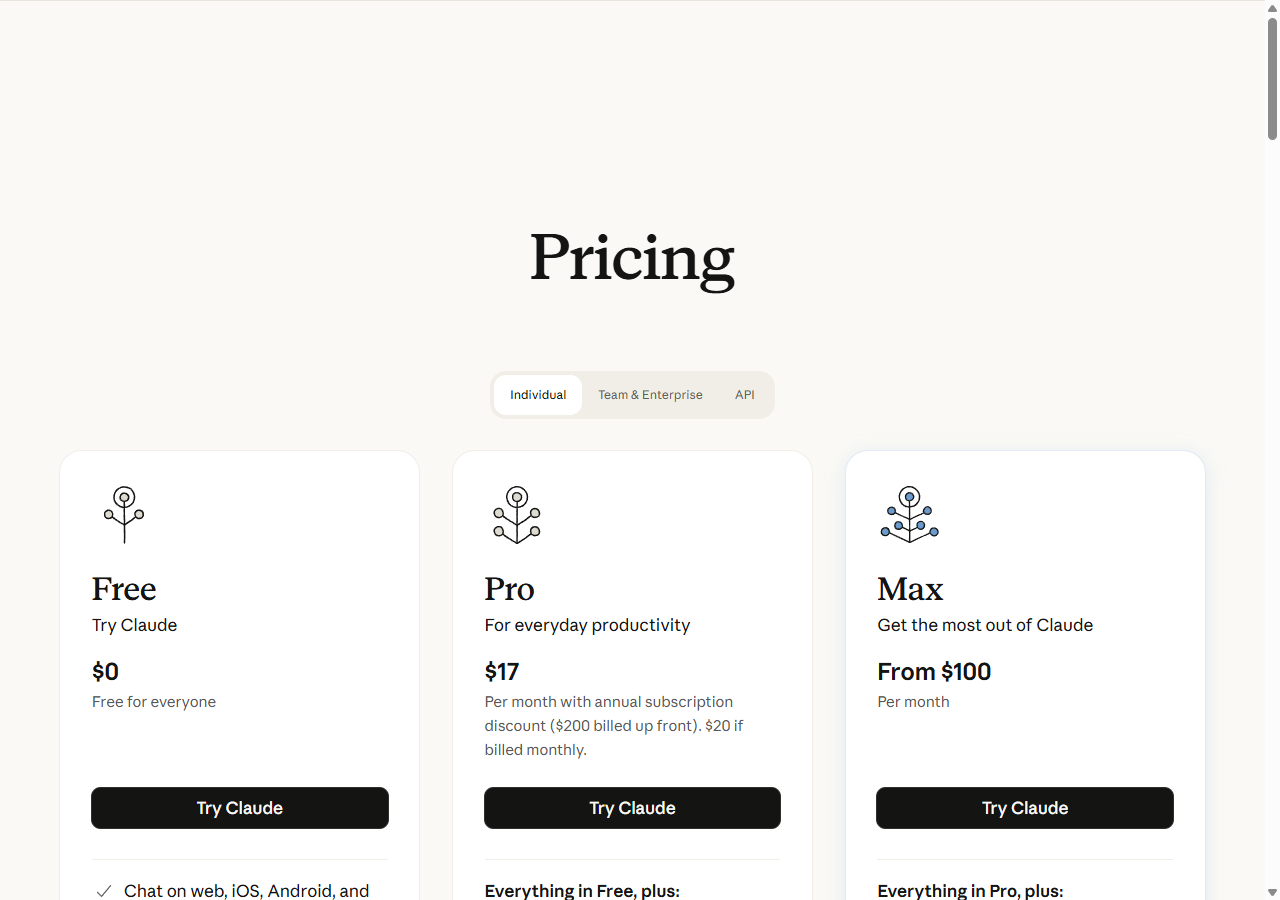

The short version: Claude’s “unlimited” access through third-party integrations is gone. Replaced with tiered subscriptions that cap your messages per day, a pay-as-you-go API that’s priced above the market, and a 2026 policy that formally restricts how third-party apps can bundle Claude access. It’s not a pivot. It’s a squeeze.

The Numbers That Should Make You Angry

Here’s what makes this particularly galling. We’re not living in a world where AI compute is getting more expensive. We’re living in the opposite world.

OpenAI’s GPT-5.4-mini costs $0.75 per million input tokens. The flagship GPT-5.4 is $2.50/million — same input price as the old GPT-4o, with meaningfully better output. Google’s Gemini 2.5 Pro dropped to $1.25/million input with a 2M-token context window and reasoning built in. Meta’s Llama 4 Maverick runs under an open community license — and via providers like DeepInfra it costs $0.15/million input, or zero if you self-host. And Google’s Gemma 3 27B — Apache 2.0, free forever — scores 89% on MATH-500 and runs on a single A100. (We reviewed it here, and the verdict isn’t gentle to Claude.)

Now here’s Claude’s 2026 API menu:

- Claude Sonnet 4.6: $3/million input, $15/million output

- Claude Opus 4.6: $5/million input, $25/million output

- Claude Haiku 4.5: $1/million input, $5/million output
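To see what those rates mean on an actual invoice, here is a minimal sketch. The prices are the ones listed above; the workload (50M input and 10M output tokens a month) is a made-up assumption, and `monthly_cost` is a hypothetical helper, not part of any Anthropic SDK.

```python
# Prices per million tokens in USD, taken from the list above.
CLAUDE_PRICES = {
    "sonnet-4.6": (3.00, 15.00),
    "opus-4.6": (5.00, 25.00),
    "haiku-4.5": (1.00, 5.00),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a given token volume on one model."""
    input_price, output_price = CLAUDE_PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 50M input tokens and 10M output tokens per month.
    for name in CLAUDE_PRICES:
        print(f"{name}: ${monthly_cost(name, 50_000_000, 10_000_000):,.2f}")
```

On that assumed workload, Sonnet comes to $300, Opus to $500, and Haiku to $100 a month. Swap in your own token counts; the shape of the bill matters more than these particular numbers.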

To Anthropic’s credit, Opus dropped from the obscene $15/$75 rate — so the API itself is no longer actively hostile. But that misses the real story. The problem isn’t the API price tag anymore. It’s the subscription ceiling.

Claude Pro at $20/month gets you “hundreds of messages per day.” Sounds fine until you’re a developer testing prompts at any meaningful volume, or a power user mid-workflow at 4pm who just hit a wall. Claude Max starts at $100/month and is billed as 5x or 20x the message allowance of Pro, depending on the tier. That’s $100 to $200 per month for access that still isn’t unlimited. And as of 2026, Anthropic has formally closed the third-party loophole: apps that previously offered Claude access as a bundled feature of their subscription can no longer do that. The unlimited flat-rate era is over by policy, not just by practice.

One solo founder reported their effective Claude cost going from a predictable ~$45/month to $180 in the first billing cycle under the new model. Another reported a 3.4x spike on a customer support automation that had been stable for six months. These aren’t edge cases. This is the design.

The “Capacity Is a Resource” Argument Is Insulting

Anthropic’s official framing: “capacity is a finite resource” and the old model created unsustainable load. Fair enough, in theory. Except that argument might have landed differently if every other AI provider weren’t simultaneously cutting costs to compete for the same workloads.

What they’re actually saying is: we mispriced the product, and now you’re going to pay to fix our spreadsheet. The tiered subscription model is packaging designed to make a price increase feel like a feature. It’s not flexibility. It’s a menu at a restaurant where every item just got more expensive and they printed new menus so you’d forget what things used to cost.

The cynical version — which is probably the accurate version — is that Anthropic has decided its core advantage is “safest AI” and “best at reasoning,” not “cheapest.” That’s a defensible strategy. But it requires being honest about it instead of dressing up a margin expansion as a resource allocation problem.

Who Gets Hurt First

Not big enterprise. Enterprise customers negotiated contracts. They have dedicated account managers, SLAs, and volume discounts. They’re fine.

The people getting hit are:

- Solo founders and small teams who built on Claude specifically because of the quality-per-dollar at the time they chose it. Switching AI providers mid-product is not a weekend project. It means re-evals, prompt rewrites, regression testing, and potentially rebuilding pipelines. The switching cost is real — and Anthropic knows this.

- Developers using Claude via third-party platforms who didn’t even realize their tool was routing through Claude until the bill arrived. They didn’t choose Claude. Their vendor did. Now they’re collateral damage — and as of 2026, their vendor can’t even offer that bundled access anymore.

- Internal tools and automations with variable usage patterns. Predictable flat costs are what make these projects budget-friendly. Message caps and variable billing turn them into a monthly anxiety event.
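For teams stuck with variable billing, one way to catch a bad month early is to project month-end spend from what has been billed so far. This is a minimal sketch assuming a plain linear projection; `project_month_end`, `over_budget`, and the figures in the usage note are hypothetical, and real usage is rarely this even.

```python
import calendar
from datetime import date


def project_month_end(spend_to_date: float, today: date) -> float:
    """Linearly extrapolate month-to-date spend to the end of the month."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return spend_to_date / today.day * days_in_month


def over_budget(spend_to_date: float, today: date, budget: float) -> bool:
    """Return True if the linear projection exceeds the monthly budget."""
    return project_month_end(spend_to_date, today) > budget


if __name__ == "__main__":
    # Hypothetical: $60 billed by April 10 against a $45 monthly budget.
    today = date(2026, 4, 10)
    print(project_month_end(60.0, today), over_budget(60.0, today, 45.0))
```

With $60 billed by April 10, the projection lands at $180 for the month, four times a $45 budget. A check like this will not lower the bill, but it moves the surprise from invoice day to day ten.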

In a community survey on r/ClaudeAI from late March 2026, 63% of respondents said they were “actively evaluating alternatives” following the pricing changes. That’s not a protest vote. That’s a migration in progress.

The Alternatives Are Actually Good Now

This is the part Anthropic probably hoped you wouldn’t notice. Eighteen months ago, “switch from Claude” was a real sacrifice in quality. Today, it isn’t.

Here’s what the market looks like right now, April 2026:

| Model | Input ($/1M) | Output ($/1M) | Context | Notable |
|---|---|---|---|---|
| Claude Sonnet 4.6 | $3.00 | $15.00 | 1M | Our subject. Best reasoning, highest price. |
| GPT-5.4-mini (OpenAI) | $0.75 | $4.50 | 128K | 4× cheaper input than Sonnet. Batch API halves it again. |
| GPT-5.4 (OpenAI) | $2.50 | $15.00 | 270K+ | Flagship. Comparable to Sonnet on most benchmarks. |
| Gemini 2.5 Pro (Google) | $1.25 | $10.00 | 2M | 2M token context. Built-in reasoning. Hard to beat at this price. |
| Llama 4 Maverick (Meta) | $0.15* | $0.60* | 1M | Open weights. Self-host = $0. *Via third-party APIs (DeepInfra etc.) |
| Gemma 3 27B (Google) | Free | Free | 128K | Apache 2.0. Self-host on A100. 89% on MATH-500. No caps. No subscriptions. |

GPT-5.4-mini at $0.75/million input is the most direct gut-punch: it’s competitive on most benchmarks and costs a quarter of what Sonnet 4.6 costs per input token, and under a third per output token. Gemini 2.5 Pro at $1.25/million comes with a 2-million-token context window, native reasoning, and Google-infrastructure speed. Llama 4 Maverick is open weights; via DeepInfra it’s $0.15/million input, and if you have the GPU budget to self-host, it costs you electricity. And Gemma 3 27B (free, Apache-licensed, 89% MATH-500, runs locally on a single A100) represents the full end-state of what happens when frontier-quality models go open-source. No per-message caps. No “hundreds of messages” language that means something different on day 29 of the month. No subscriptions at all.

For most tasks — writing, summarization, code, data extraction — the performance gap between Claude and its alternatives has narrowed to the point where the price difference is the only meaningful differentiator. And right now, that differentiator works against Claude.

There are still things Claude does better. Long-form writing with nuance. Certain reasoning chains. Consistency in following complex instructions over long conversations. But “better at some things” needs to be weighed against “costs 2-4× more with daily message caps and no unlimited tier at any price.” For most teams, that math doesn’t work anymore.
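How big is the multiple, exactly? A quick sketch using only the list prices from the table above. `sonnet_multiple` is a hypothetical helper; Gemma 3 27B is left out because dividing by a price of zero is meaningless, and self-hosting costs are not modeled.

```python
# Input/output prices per million tokens in USD, copied from the table above.
BASELINE = "Claude Sonnet 4.6"
PRICES = {
    "Claude Sonnet 4.6": (3.00, 15.00),
    "GPT-5.4-mini": (0.75, 4.50),
    "GPT-5.4": (2.50, 15.00),
    "Gemini 2.5 Pro": (1.25, 10.00),
    "Llama 4 Maverick (DeepInfra)": (0.15, 0.60),
}


def sonnet_multiple(model: str) -> tuple[float, float]:
    """Return how many times more Sonnet costs than `model` (input, output)."""
    base_in, base_out = PRICES[BASELINE]
    model_in, model_out = PRICES[model]
    return base_in / model_in, base_out / model_out


if __name__ == "__main__":
    for name in PRICES:
        if name == BASELINE:
            continue
        in_x, out_x = sonnet_multiple(name)
        print(f"{name}: {in_x:.1f}x input, {out_x:.1f}x output")
```

On list prices, Sonnet's input multiple runs from about 1.2x against GPT-5.4 to 2.4x against Gemini 2.5 Pro, 4x against GPT-5.4-mini, and 20x against Llama 4 Maverick via DeepInfra. Output multiples are smaller for the closed models (1.0x, 1.5x, and about 3.3x) and larger for Llama (25x).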

This Is Part 1

We’re not done with this story. Part 2 covers the specific migration paths — which tools, which APIs, which providers hold up under real workloads. We’ll run the numbers, not the marketing copy.

If you’ve been affected by the pricing changes — as a developer, a tool user, or a business owner — the comment section is open. Real experiences are more useful than speculation.

The bottom line: Anthropic built something genuinely impressive, then decided the people who made it popular were a problem to be monetized rather than a community to be protected. The API prices are no longer insane. But the subscription caps, the Max tier that starts at $100/month and still isn’t unlimited, and the 2026 third-party policy that kills bundled access add up to something other than a pricing model. It’s a loyalty test to see who stays anyway.

The market will answer. It already is.

Rating: ⚫ Uninstalled in 10 minutes — not for the product, which remains technically capable. For the business decision to break trust with the people who built their workflows around it.

All opinions expressed on BluntAI are editorial opinions based on publicly available information and community reporting. Pricing data current as of April 2026. We may earn affiliate commissions from links on this site.