A 20-Year-Old Dropout Built the Memory Layer Your AI Agent Is Missing

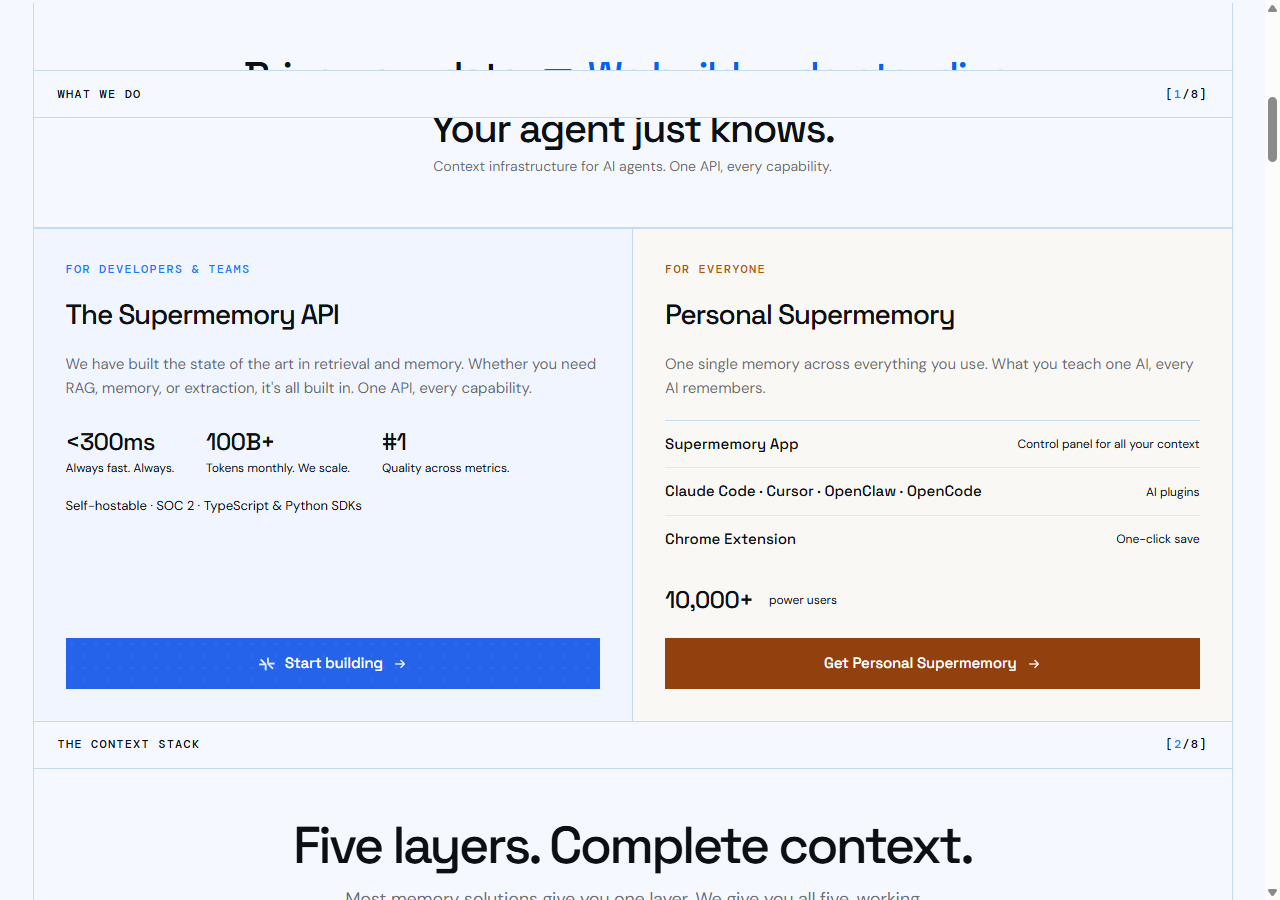

What It Actually Does

Strip away the founder story: Supermemory is a managed memory layer for AI applications. You feed it user interactions, it figures out what’s worth remembering, and it surfaces relevant context when the LLM needs it.
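To make the keep/forget/surface loop concrete, here’s a toy sketch in Python. It is not Supermemory’s API or algorithm. The class and method names, the dotted key scheme, and the caller-supplied importance scores are all hypothetical. A real memory layer would make those decisions with a model.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class Memory:
    key: str           # hypothetical keying scheme, e.g. "user.language"
    value: str
    importance: float  # 0..1, supplied by the caller in this toy
    updated_at: float


class ToyMemoryLayer:
    """Hypothetical illustration only; not Supermemory's API or algorithm."""

    def __init__(self, min_importance: float = 0.5) -> None:
        self.min_importance = min_importance
        self.memories: dict[str, Memory] = {}

    def ingest(self, key: str, value: str, importance: float,
               now: Optional[float] = None) -> bool:
        # Keep: low-importance chatter is dropped instead of stored.
        if importance < self.min_importance:
            return False
        # Forget: a newer fact under the same key overwrites the stale one.
        ts = time.time() if now is None else now
        self.memories[key] = Memory(key, value, importance, ts)
        return True

    def recall(self, query_terms: list[str], limit: int = 3) -> list[Memory]:
        # Surface: keys matching any query term, most important then newest first.
        hits = [m for m in self.memories.values()
                if any(term in m.key for term in query_terms)]
        hits.sort(key=lambda m: (m.importance, m.updated_at), reverse=True)
        return hits[:limit]

    def context_for_prompt(self, query_terms: list[str]) -> str:
        # Render recalled memories as lines to prepend to an LLM prompt.
        return "\n".join(f"- {m.key}: {m.value}" for m in self.recall(query_terms))


layer = ToyMemoryLayer()
layer.ingest("user.language", "Python", 0.8, now=1.0)
layer.ingest("chat.weather", "It's sunny today", 0.1, now=2.0)  # dropped
layer.ingest("user.language", "TypeScript", 0.9, now=3.0)       # replaces "Python"
print(layer.context_for_prompt(["language"]))  # - user.language: TypeScript
```

The toy highlights one design choice. The layer decides at write time what’s worth keeping and which facts supersede others. It does not defer everything to similarity search at read time.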

The key word is “figures out.” Most memory solutions are glorified vector databases — they store everything and hope cosine similarity finds the right chunk. Supermemory claims to be smarter about what to keep, what to forget, and what to surface when.
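For contrast, here’s the “glorified vector database” pattern the paragraph describes: store every chunk, rank it by cosine similarity, and return the top k. The three-dimensional embeddings are hand-written stand-ins for a real embedding model’s output. They were chosen to show the failure mode.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    # Cosine similarity: dot product divided by the product of vector norms.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class NaiveVectorMemory:
    def __init__(self) -> None:
        self.items: list[tuple[str, list[float]]] = []

    def add(self, text: str, embedding: list[float]) -> None:
        # Stores everything: no filtering, no deduplication, no expiry.
        self.items.append((text, embedding))

    def search(self, query: list[float], k: int = 2) -> list[str]:
        # Rank every stored chunk by similarity and hope the top k are right.
        ranked = sorted(self.items, key=lambda item: cosine(query, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


mem = NaiveVectorMemory()
mem.add("User prefers Python", [0.9, 0.1, 0.0])
mem.add("User switched from Python to TypeScript", [0.8, 0.3, 0.0])
mem.add("User's cat is named Miso", [0.0, 0.1, 0.9])

# A query about language preference: the stale fact ranks first.
print(mem.search([1.0, 0.0, 0.0], k=1))  # ['User prefers Python']
```

Nothing in the store knows that the second memory supersedes the first. Similarity alone decides. That’s the gap Supermemory claims to close.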

In production, they’re processing 100 billion tokens per month with sub-300ms recall. That’s not a demo number. That’s real infrastructure under real load.

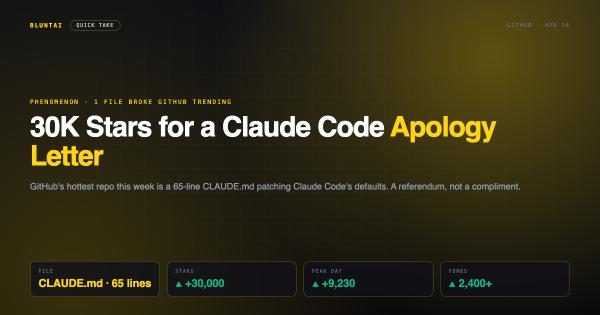

They recently shipped a Claude Code plugin too: persistent memory across coding sessions via MCP. If you’ve ever been annoyed that Claude forgets your project context between conversations, that’s the use case.

The Numbers (Verified)

LongMemEval benchmark: 85.2% in production. For context, Zep gets 71.2% on the same benchmark. Mem0 is somewhere in that range too, though good luck getting a straight comparison — everyone in this space accuses everyone else of cooking their benchmarks.
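One way to put “good luck getting a straight comparison” into numbers is a normal-approximation (Wald) 95% interval on each accuracy score. This sketch makes two assumptions. First, each system was scored on LongMemEval’s 500-question set under comparable conditions. Second, Mem0 sits at ~75%, since only an approximate figure is quoted. The interval captures sampling noise only. It says nothing about differences in setup, judge model, or prompt tuning, which are what the benchmark-cooking accusations are actually about.

```python
import math


def wald_interval(accuracy: float, n: int, z: float = 1.96) -> tuple[float, float]:
    # Normal approximation to a binomial proportion: p ± z * sqrt(p(1-p)/n).
    se = math.sqrt(accuracy * (1 - accuracy) / n)
    return accuracy - z * se, accuracy + z * se


N_QUESTIONS = 500  # assumption: the full LongMemEval question set

for name, acc in [("Supermemory", 0.852), ("Mem0 (approx.)", 0.75), ("Zep", 0.712)]:
    lo, hi = wald_interval(acc, N_QUESTIONS)
    print(f"{name:15} {acc:.1%}  95% CI {lo:.1%} to {hi:.1%}")
```

Under those assumptions, Supermemory’s interval (82.1% to 88.3%) doesn’t overlap Zep’s (67.2% to 75.2%). Mem0’s (71.2% to 78.8%) does overlap Zep’s, so that particular gap is within sampling noise.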

There’s also an experimental system called ASMR (Agentic Search and Memory Retrieval) that hit 98.6% — without a vector database. That’s impressive if it ships. It hasn’t yet.

Recall speed: Sub-300ms. Zep takes about 4 seconds. Mem0 takes 7-8 seconds. This is Supermemory’s strongest differentiator and it’s not close.
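Vendor latency numbers are easy to check against your own workload. Below is a minimal timing harness. `recall` and `fake_recall` are hypothetical stand-ins for whatever client call you’re evaluating. The harness doesn’t assume any particular SDK.

```python
import statistics
import time
from typing import Callable


def measure_latency_ms(recall: Callable[[str], object], queries: list[str],
                       warmup: int = 3) -> dict[str, float]:
    # Warm-up calls absorb connection setup and caches; they aren't recorded.
    for q in queries[:warmup]:
        recall(q)
    samples = []
    for q in queries:
        start = time.perf_counter()
        recall(q)
        samples.append((time.perf_counter() - start) * 1000)
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {"p50": statistics.median(samples), "p95": cuts[94], "max": max(samples)}


def fake_recall(query: str) -> list[str]:
    # Hypothetical stand-in: replace with the real memory client's search call.
    time.sleep(0.005)
    return [query]


print(measure_latency_ms(fake_recall, [f"query {i}" for i in range(50)]))
```

Look at p95 as well as the median. A headline figure may describe typical calls, but tail latency is what users notice.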

Production scale: 5+ billion tokens daily across enterprise customers. They had a database incident in March 2026 from scaling too fast: API key tracking queries were hammering their DB on every authenticated request. It was fixed quickly, but it tells you they’re sometimes growing faster than their infrastructure can handle.
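The article quotes scale two ways: 100 billion tokens a month earlier, and 5+ billion a day here. Normalizing both to tokens per second puts them on one axis. The sketch assumes a 30-day month.

```python
SECONDS_PER_DAY = 86_400


def tokens_per_second(tokens: float, days: float) -> float:
    # Average sustained rate if the volume were spread evenly over the period.
    return tokens / (days * SECONDS_PER_DAY)


monthly_rate = tokens_per_second(100e9, 30)  # 100B tokens over a 30-day month
daily_rate = tokens_per_second(5e9, 1)       # 5B tokens in one day
print(f"{monthly_rate:,.0f} vs {daily_rate:,.0f} tokens/s")  # 38,580 vs 57,870 tokens/s
```

The daily figure implies a higher rate, roughly 150 billion a month, so the two numbers don’t describe exactly the same period. Read them as order-of-magnitude snapshots. Either way, the average works out to tens of thousands of tokens per second, before accounting for peaks.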

The Founder Story (Because It’s Genuinely Wild)

Dhravya Shah built a tweet-to-image tool before college, and Hypefury acquired it. He used the money to move to the US and started Supermemory as a side project for chatting with Twitter bookmarks. Dane Knecht, Cloudflare’s CTO, saw it and told him to productionize it.

The OpenClaw draft said he “worked at Mem0 and quit to build a competitor.” That’s not quite right. He had early exposure to the embedchain.ai team (Mem0’s previous name), but calling it employment overstates it. The competitive dynamics are real — Supermemory and Mem0 are absolutely going after the same market — but the “quit and competed” narrative is cleaner than the truth.

The investor list is legitimate though: Jeff Dean (Google’s AI chief), Dane Knecht (Cloudflare CTO), Logan Kilpatrick (DeepMind PM), David Cramer (Sentry founder). When Jeff Dean writes you a check, people notice.

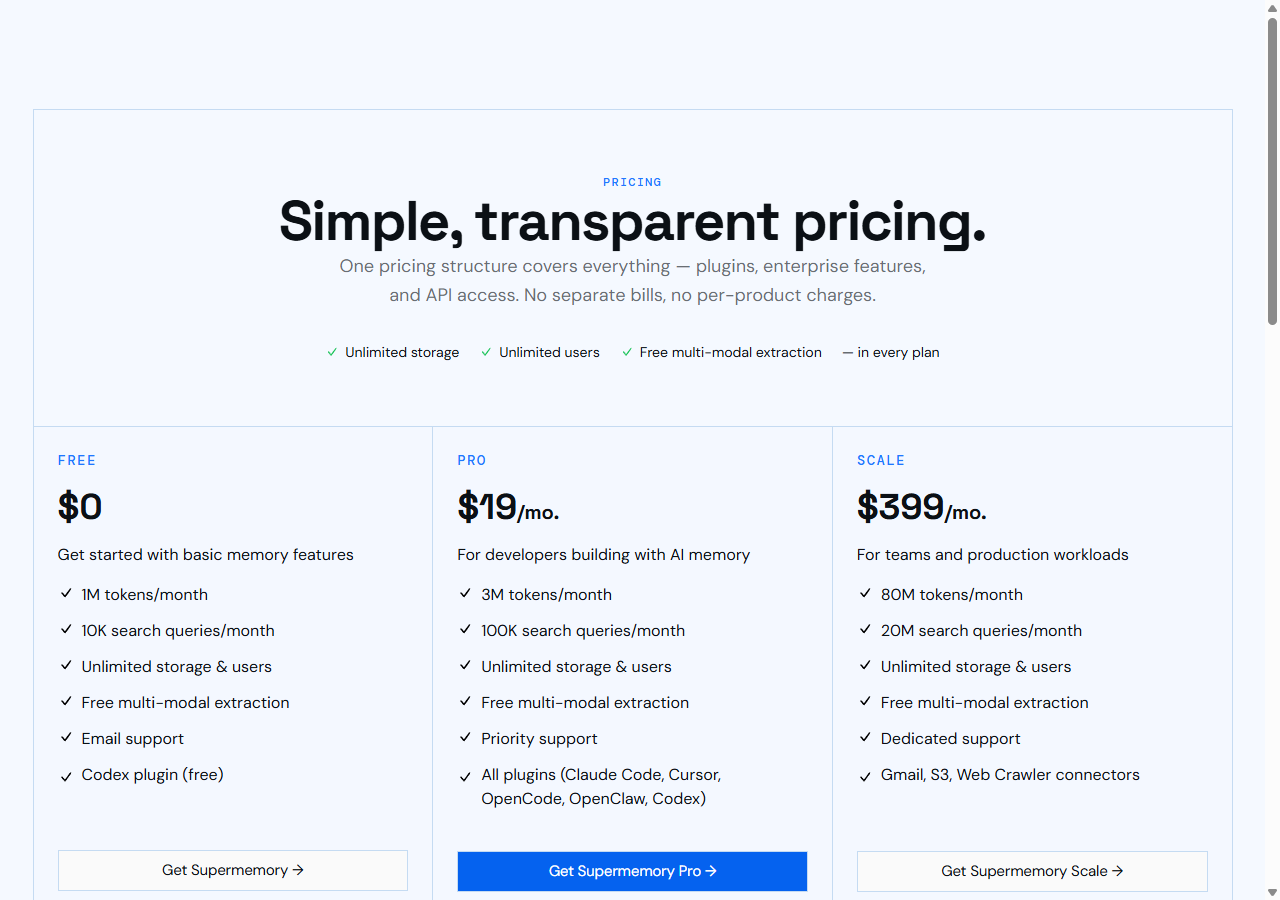

Pricing: The Cliff Problem

– Pro: $19/month — 3M tokens, 100K search queries

– Scale: $399/month — 80M tokens, 20M search queries

– Overage: $0.01 per 1,000 tokens

The jump from $19 to $399 is brutal. There’s no middle tier. If you’re an indie developer who outgrows Pro, you’re suddenly paying enterprise rates. That’s either a deliberate qualification filter or a pricing gap they haven’t bothered to fix. Either way, plan your budget.
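Here’s the budget arithmetic for the cliff. The pricing list doesn’t say whether overage applies on Pro. This sketch assumes it does, at the same $0.01 per 1,000 tokens on both tiers, and it ignores search-query limits entirely. Under that assumption, you can compute where Scale becomes the cheaper plan:

```python
# Figures from the pricing list above; overage on Pro is an assumption.
PRO_BASE_USD = 19
PRO_INCLUDED_TOKENS = 3_000_000
SCALE_BASE_USD = 399
SCALE_INCLUDED_TOKENS = 80_000_000
TOKENS_PER_CENT = 1_000  # $0.01 per 1,000 tokens


def monthly_cost_usd(tokens: int, base_usd: int, included: int) -> float:
    # Base fee plus overage on tokens beyond the plan's included allowance.
    excess = max(0, tokens - included)
    return base_usd + excess / TOKENS_PER_CENT / 100


# Token volume at which Pro plus overage costs exactly as much as Scale.
break_even_tokens = PRO_INCLUDED_TOKENS + (SCALE_BASE_USD - PRO_BASE_USD) * 100 * TOKENS_PER_CENT

for tokens in (3_000_000, 10_000_000, break_even_tokens, 60_000_000):
    pro = monthly_cost_usd(tokens, PRO_BASE_USD, PRO_INCLUDED_TOKENS)
    scale = monthly_cost_usd(tokens, SCALE_BASE_USD, SCALE_INCLUDED_TOKENS)
    print(f"{tokens:>11,} tokens: Pro ${pro:,.2f} vs Scale ${scale:,.2f}")
```

If that assumption holds, Pro plus overage stays cheaper up to 41M tokens a month. If it doesn’t, the $399 jump hits as soon as you outgrow 3M tokens. Either way, the 100K search-query cap on Pro may bind first.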

Self-hosting is technically available but enterprise-only. The open source parts are plugins and integrations, not the core engine. Don’t confuse “open source MCP plugin” with “open source product.”

How It Stacks Against Mem0 and Zep

| | Supermemory | Mem0 | Zep |
|---|---|---|---|
| Recall speed | <300ms | 7-8s | ~4s |
| LongMemEval | 85.2% | ~75% | 71.2% |
| GitHub stars | ~17K | ~50K | ~25K |
| Approach | Auto-capture, user-centric | Fine-grained control, team-centric | Graph-based |
| Self-host | Enterprise only | Open source core | Open source |

Mem0 has roughly 3x the GitHub stars and an open source core you can actually self-host. If you want control, Mem0 wins. If you want speed and don’t want to think about memory management, Supermemory wins. Zep lands in between on recall speed (faster than Mem0, slower than Supermemory) and trails both on LongMemEval.

The benchmark wars in this space are tiresome. Mem0 and Zep have publicly accused each other of gaming evaluations. Supermemory’s numbers look real, but treat every benchmark in the memory space with a healthy dose of skepticism.

Bottom Line

Supermemory solves a real problem — AI agents that actually remember things — and solves it faster than the competition. The production numbers are legitimate, the investors are serious, and the recall speed advantage is significant.

The concerns are familiar startup ones: pricing gap between tiers, “self-hostable” claims that don’t hold up for non-enterprise users, and a founder story that’s been slightly mythologized. None of these are dealbreakers.

If you’re building AI products that need persistent memory across sessions, Supermemory is worth evaluating against Mem0. Speed vs control — pick your priority.

Rating: Solid, no drama. It does what it says, faster than the alternatives. Just budget for that $399 cliff if you’re planning to scale.


All opinions expressed on BluntAI are editorial opinions based on publicly available information and personal testing. We may earn affiliate commissions from links on this site.

Disclaimer: BluntAI may earn affiliate commissions from links in this article. This never influences our reviews. We buy and test everything ourselves. Our opinions are brutally our own.